SWE Atlas - Test Writing

Evaluating an agent’s ability to write production-grade tests

TL;DR:

We are launching SWE Atlas - Test Writing, the second leaderboard in the SWE Atlas evaluation suite for coding agents.

Frontier models score below 45% on the benchmark, which evaluates a model’s test-writing ability using a multi-step, professional-grade evaluation.

The best performing model on the benchmark is GPT-5.4 xhigh, which claims the top spot by writing precise, targeted tests after a deep and comprehensive codebase exploration, while also adhering to the codebase conventions and best practices.

Open models like GLM-5 and Kimi K2.5 significantly lag behind closed frontier models, and write an excessive number of tests unrelated to the scope of the prompt while missing key tests that are needed. This highlights new avenues for research to build strong open models for the ML community.

Overview

SWE Atlas is a benchmark for evaluating AI coding agents across a spectrum of professional software engineering tasks. Rather than measuring a single skill, SWE Atlas consists of three leaderboards that target distinct and complementary capabilities in the Software Development Cycle:

Codebase QnA - Understand complex codebases through runtime analysis and multi-file reasoning questions

Test Writing - Write meaningful production-grade tests for a given behavior in the repository.

Refactoring - Restructure code to improve performance & readability while preserving behavior.

We are releasing results for Test Writing, the second benchmark in the SWE Atlas suite. All the data and code to run the benchmark can be found in https://github.com/scaleapi/SWE-Atlas.

Test Writing consists of 90 high quality tasks that target a coding agent’s Test Writing ability.

The agent is given access to a code repository inside a Docker container in which some crucial tests for an important workflow are missing. These tasks are agentic by design: the prompts describe the workflow or behavior to be tested at a high level, with only the relevant description. The agent needs to autonomously explore the codebase, identify which specific tests need to be written and where to place them, execute them, and then submit. The tests are expected to cover the behaviors described in the prompt, limit their scope to code relevant to that behavior, and be clean and maintainable, upholding the conventions and best practices of the codebase.

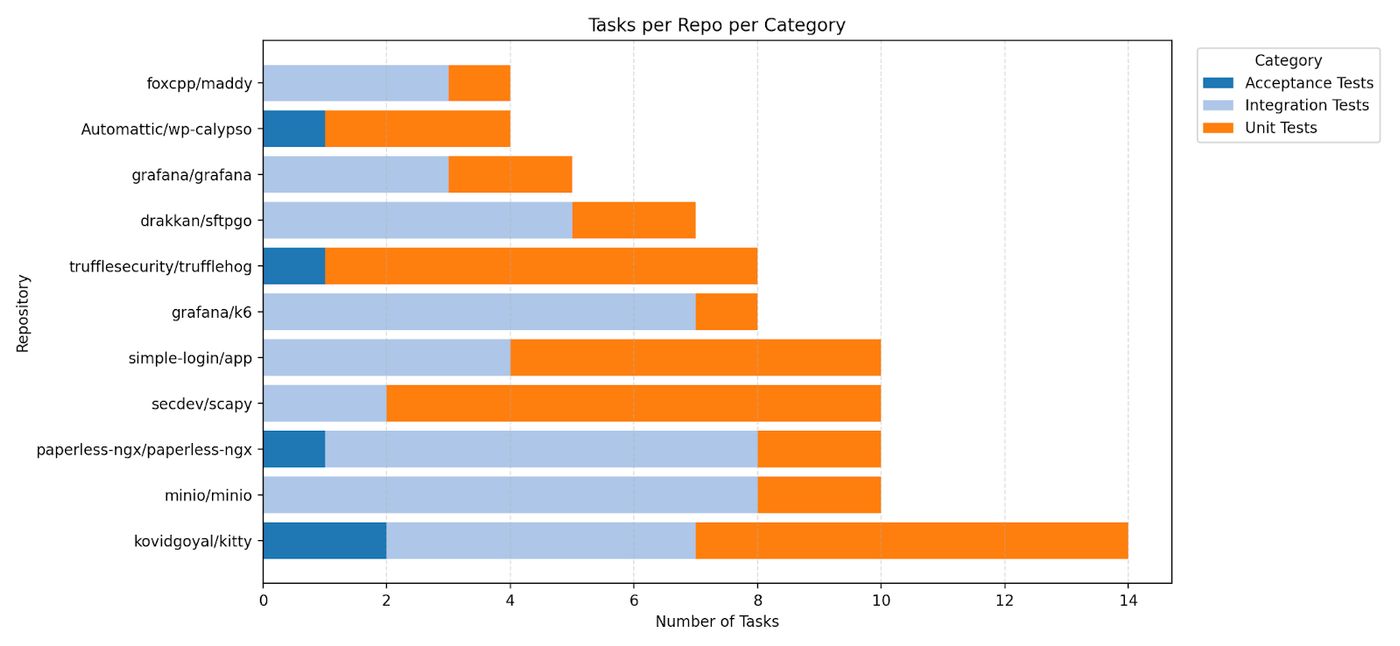

The benchmark consists of tasks drawn from 11 production repositories across 4 programming languages: Go, Python, C, and TypeScript. Top models score under 45%, leaving substantial room for improvement.

Dataset Description

Repository. Repositories are selected from SWE-Bench Pro and represent real-world software complexity: mail servers, terminal emulators, object storage systems, observability platforms, secret scanners, etc. These are large, actively maintained open-source codebases with non-trivial architectures. They are also contamination-resistant, using strong copyleft licenses (e.g., GPL).

Environment. For each repository, engineers build a reproducible Docker image pinned to a specific commit, with all dependencies pre-installed, such that the software can be built, run, and tested.

Prompts. Professional software engineers and technical experts with significant coding and agentic experience write problem statements that require multi-step reasoning across the codebase. Experts first spend time familiarizing themselves with each repository's functionality, implementation, and edge cases before authoring test writing tasks. We ask that task prompts be written in natural language, emulating how engineers interact with coding agents like Claude Code or Cursor; as a result, the prompts are underspecified by nature.

Tasks consist of writing Unit Tests, Integration Tests and Acceptance Tests.

Unit Tests: Unit tests verify that individual, isolated pieces of code (like a single function or class) work correctly on their own.

Integration Tests: Integration tests check that multiple pieces of code or separate services function correctly when connected and communicating with each other.

Acceptance Tests: Acceptance tests evaluate the fully assembled application to ensure it meets the original business requirements and works as expected from an end-user's perspective.

We illustrate an example Integration Test task below, from the paperless-ng repository:

I need five integration tests for the document exporter in paperless-ng. The export functionality works but we're missing some key tests. The test class with setUp and helpers is already in place. Let's reuse that. Write 1 integration test for each scenario:

1. A full export and re-import round-trip that validates the manifest structure, exported file integrity, and that data survives a clean database wipe and re-import with no sanity check issues.

2. An incremental export test verifying that unchanged files are skipped on re-export, but modifying a file's timestamp triggers a re-copy.

3. An incremental export test using checksum comparison mode, verifying that a document with an altered checksum gets re-exported while others are skipped.

4. A test for exporting after a document is deleted from the database, covering both default behavior and the delete cleanup option for orphaned files.

5. A test for export with a custom filename format where a document's metadata changes between exports, verifying file relocation and old directory cleanup.

Each prompt also comes with a standardised run script, and the agent is instructed to ensure that its tests can be run with it. The verifier uses the same run script to run the mutation test during evaluation.

Each response is evaluated in 3 steps:

1. Manifest check: An LLM judge (Claude Opus 4.5) checks if the manifest accurately lists all the changes made by the agent, given the agent’s manifest file and the submitted test patch.

Pass criteria: LLM judge grades it as a Pass.

Below is a sample manifest file created by GPT-5.4 xhigh (Codex):

<<TEST_MANIFEST>>
- file: src/documents/tests/test_management_exporter.py
  tests:
    - TestExportImport.test_full_export_and_reimport_round_trip_survives_clean_wipe
    - TestExportImport.test_incremental_export_reuses_existing_files_until_source_mtime_changes
    - TestExportImport.test_incremental_export_compare_checksums_recopies_changed_content
    - TestExportImport.test_export_after_document_deletion_keeps_or_cleans_orphans
    - TestExportImport.test_export_with_filename_format_relocates_files_and_cleans_old_directory
<<TEST_MANIFEST>>
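As a rough illustration of how such a manifest could be consumed, the sketch below extracts the test names found between the two `<<TEST_MANIFEST>>` markers. It assumes a one-entry-per-line, YAML-like layout (a `- file: ...` line, a `tests:` line, then `- TestName` lines); the function name `parse_manifest` and this layout are assumptions made for illustration, not the benchmark's actual parser.

```python
import re

MARKER = "<<TEST_MANIFEST>>"


def parse_manifest(text: str) -> dict[str, list[str]]:
    """Map each listed test file to the test names declared under it.

    Hypothetical helper: assumes one manifest entry per line.
    """
    parts = text.split(MARKER)
    if len(parts) < 3:
        raise ValueError("expected an opening and a closing manifest marker")

    manifest: dict[str, list[str]] = {}
    current_file = None
    for raw_line in parts[1].splitlines():
        line = raw_line.strip()
        if not line or line == "tests:":
            continue
        file_match = re.match(r"-\s*file:\s*(\S+)$", line)
        if file_match:
            # Start a new file entry; later "- name" lines belong to it.
            current_file = file_match.group(1)
            manifest[current_file] = []
        elif line.startswith("- ") and current_file is not None:
            manifest[current_file].append(line[2:].strip())
    return manifest
```

A real pipeline would more likely hand the body to a YAML parser; the line-based approach here only keeps the sketch free of third-party dependencies.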

2. Mutation test: During task creation, contributors also identify the code in the codebase that is relevant to the prompt. The agent’s tests listed in the manifest are first run and must pass (baseline pass). The relevant code for the task is then removed (mutation) and the tests are re-run; they must now fail (mutation fail).

Pass criteria: All tests listed in the manifest pass at baseline, and all of them fail after mutation, i.e. no extraneous tests are added.
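The pass criteria above can be sketched as a simple predicate over per-test outcomes. This is a minimal sketch under stated assumptions: `ManifestTestResult` and `mutation_check` are hypothetical names, and the real verifier obtains these outcomes by running the task's run script before and after the mutation.

```python
from dataclasses import dataclass


@dataclass
class ManifestTestResult:
    name: str
    passed_baseline: bool  # outcome on the unmodified repository
    passed_mutated: bool   # outcome after the relevant code is removed


def mutation_check(results: list[ManifestTestResult]) -> bool:
    """Pass only if every manifest test passes at baseline and fails after mutation."""
    if not results:
        # Assumption: a submission with no listed tests cannot pass.
        return False
    return all(r.passed_baseline and not r.passed_mutated for r in results)
```

Under this rule, a single extraneous test that still passes after the mutation is enough to fail the whole task, which is why unrelated tests are penalized.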

3. Rubric grading: The agent’s test patch is then graded in detail using an LLM judge against a comprehensive rubric set, which consists of both must-have and nice-to-have rubrics, each receiving a binary pass or fail judgement.

Pass criteria: All must-have rubrics are graded as a Pass.

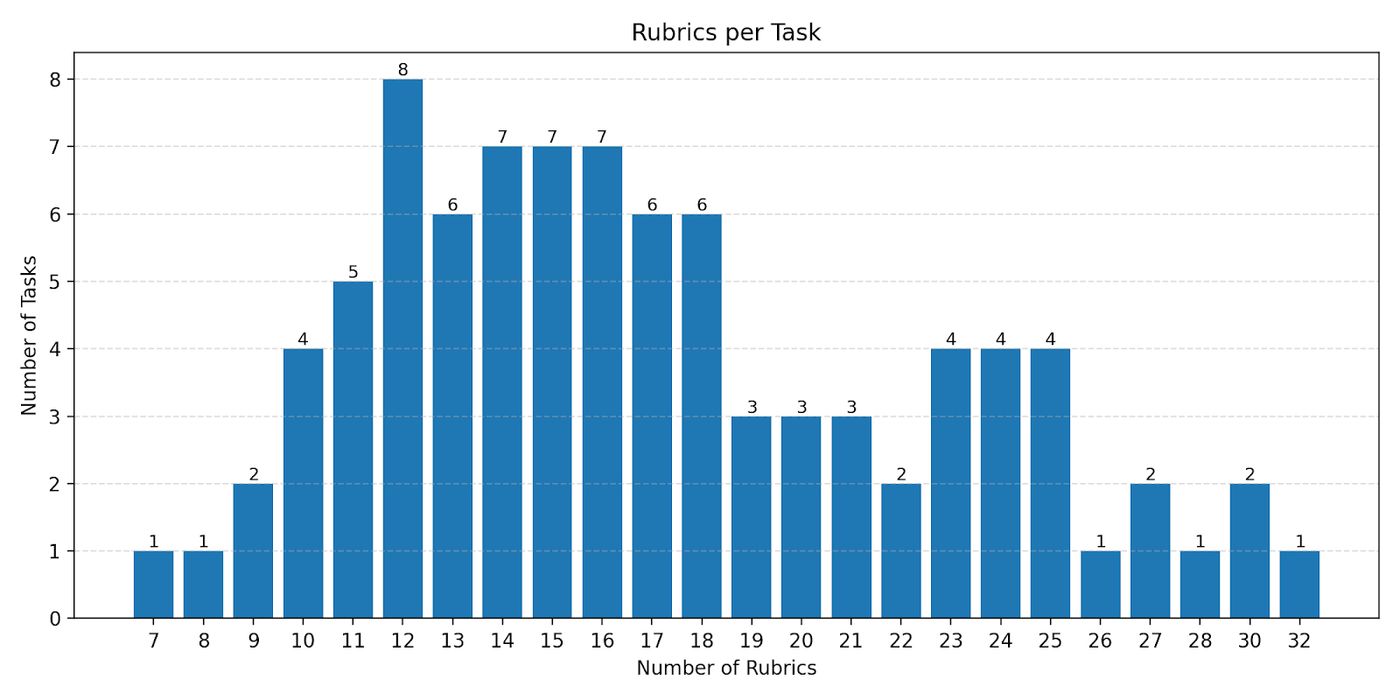

Rubric creation. For each task, human experts define a structured rubric of evaluation criteria. Each criterion checks for a specific, verifiable factual claim. Rubrics follow standard design principles: specific (with little or no room for multiple interpretations), atomic (testing one distinct aspect), and self-contained (gradable without external knowledge).

Rubrics are categorized into 4 axes:

Test Comprehensiveness (mandatory) - Checks if the agent’s tests submitted are comprehensive and cover all the required cases.

Test Placement (nice-to-have) - Checks if the tests are placed in the right location in the codebase.

Test suite conventions (nice-to-have) - Checks if the tests follow the global codebase and language/framework conventions and best practices.

Test bucket conventions (nice-to-have) - Checks if the tests follow good coding practices locally, like reusing helper functions.

We use the mandatory rubrics (Test Comprehensiveness) to score and rank the models. The grading of the nice-to-have rubrics is used only for qualitative analysis.
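To illustrate how mandatory and nice-to-have rubrics play different roles, here is a minimal sketch. `RubricResult`, `rubric_check`, and `axis_pass_rates` are hypothetical names, and the axis strings are assumed to match the category labels; the binary `passed` field stands in for the LLM judge's verdict.

```python
from collections import defaultdict
from dataclasses import dataclass

# Assumption: only this axis is mandatory, as described in the text.
MANDATORY_AXES = {"test comprehensiveness"}


@dataclass
class RubricResult:
    axis: str
    title: str
    passed: bool  # binary pass/fail verdict from the judge


def rubric_check(results: list[RubricResult]) -> bool:
    """Pass only if every mandatory rubric is graded as a pass."""
    mandatory = [r for r in results if r.axis in MANDATORY_AXES]
    # Assumption: a task with no mandatory rubrics cannot pass.
    return bool(mandatory) and all(r.passed for r in mandatory)


def axis_pass_rates(results: list[RubricResult]) -> dict[str, float]:
    """Per-axis fraction of rubrics passed, for qualitative analysis only."""
    totals = defaultdict(lambda: [0, 0])  # axis -> [passed, graded]
    for r in results:
        totals[r.axis][0] += int(r.passed)
        totals[r.axis][1] += 1
    return {axis: passed / graded for axis, (passed, graded) in totals.items()}
```

A response can therefore pass the rubric check while failing every placement or convention rubric; those failures show up only in the per-axis rates.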

Here is a subset of the rubrics for the prompt shared above, for illustration:

test comprehensiveness:
  - title: "1.4: Tests that re-importing into a clean database restores all documents"
  - title: "1.8: Tests that modifying a file's timestamp triggers a re-copy on the next export"
  - title: "1.9: Tests that a document with an altered checksum is re-copied when checksum comparison mode is enabled"
  - title: "1.15: Tests that old directories are cleaned up after file relocation with the delete option"
test placement:
  - title: "2.1: Places the full round-trip export and import test in documents/tests/test_management_exporter.py"
  - title: "2.5: Places the filename format relocation test in documents/tests/test_management_exporter.py"
test suite conventions:
  - title: "3.1: All tests use Django's TestCase framework via the existing TestExportImport class"
  - title: "3.2: All tests follow the test_<name> naming pattern"
test bucket conventions:
  - title: "4.1: Follows the pattern of copying sample document files into the media directory before exporting, for tests that perform exports"
  - title: "4.2: Follows the pattern of using the _do_export helper method to invoke the document exporter command"

Quality Assurance. We implement a detailed multi-step pipeline to ensure that tasks are of the highest quality. Throughout the process, experts are supplemented with LLM-based agentic evaluators that monitor data quality in the same task environment that the expert is working in.

During task creation, expert contributors ensure that each rubric is fair, factual and verifiable, and ties in to the codebase’s best practices and conventions. They also ensure that rubric grading is consistent when graded by multiple LLM judges, and that tasks are of sufficient difficulty.

Post-creation, all tasks undergo a human consensus review of their rubrics with other independent experts and our internal ML team, to check for over-prescriptive rubrics that are too closely tied to the reference implementation as well as rubrics that unfairly penalize responses from agents.

Experiments

Evaluation Metric: Task Resolve Rate

During evaluation, the agent operates inside a sandboxed Docker container with the target repository mounted. The agent has access to standard shell tools (bash, grep, find, etc.) and can build and run the software and test its implementation. The agent explores the repository, runs experiments, and produces a final test suite submission patch, as well as a manifest file that lists the tests it produced.

The primary metric is the Task Resolve Rate. A task is considered resolved if it passes all three checks listed above (Manifest Check + Mutation Test + Rubrics).

Results

We ran a suite of frontier closed and open coding models on the benchmark, in Modal sandboxes with the Harbor Framework for agent evaluation. We observe that even frontier models that score high on issue resolution (e.g. >80% on SWE-Bench-Verified) score below 45% on test writing tasks, highlighting how challenging test writing is.

We evaluated models using the Mini-SWE-Agent harness to understand their capabilities to address the tasks via a common bash toolkit. We modified its system prompt and instance template to focus on test writing instead of issue resolution.

Additionally, we evaluated commonly used coding clients: Claude Code with Opus 4.6 and Sonnet 4.6, and Codex with GPT-5.3 Codex and GPT-5.4.

Each model was run with 3 trials, and we report the mean resolve rate across the trials.
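To make the metric concrete, here is a minimal sketch of how a mean resolve rate could be computed from per-task check outcomes. The names `TaskChecks`, `resolve_rate`, and `mean_resolve_rate` are hypothetical, and the benchmark's own scoring code may differ.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskChecks:
    manifest_ok: bool
    mutation_ok: bool
    rubrics_ok: bool

    @property
    def resolved(self) -> bool:
        # A task counts as resolved only if all three checks pass.
        return self.manifest_ok and self.mutation_ok and self.rubrics_ok


def resolve_rate(tasks: list[TaskChecks]) -> float:
    """Percentage of resolved tasks within a single trial."""
    if not tasks:
        raise ValueError("no tasks to score")
    return 100.0 * sum(t.resolved for t in tasks) / len(tasks)


def mean_resolve_rate(trials: list[list[TaskChecks]]) -> float:
    """Mean of the per-trial resolve rates."""
    return mean(resolve_rate(trial) for trial in trials)
```

Because the three checks are combined with a logical AND, a task that fails any single stage contributes nothing to the rate, regardless of how well it did on the others.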

Analysis

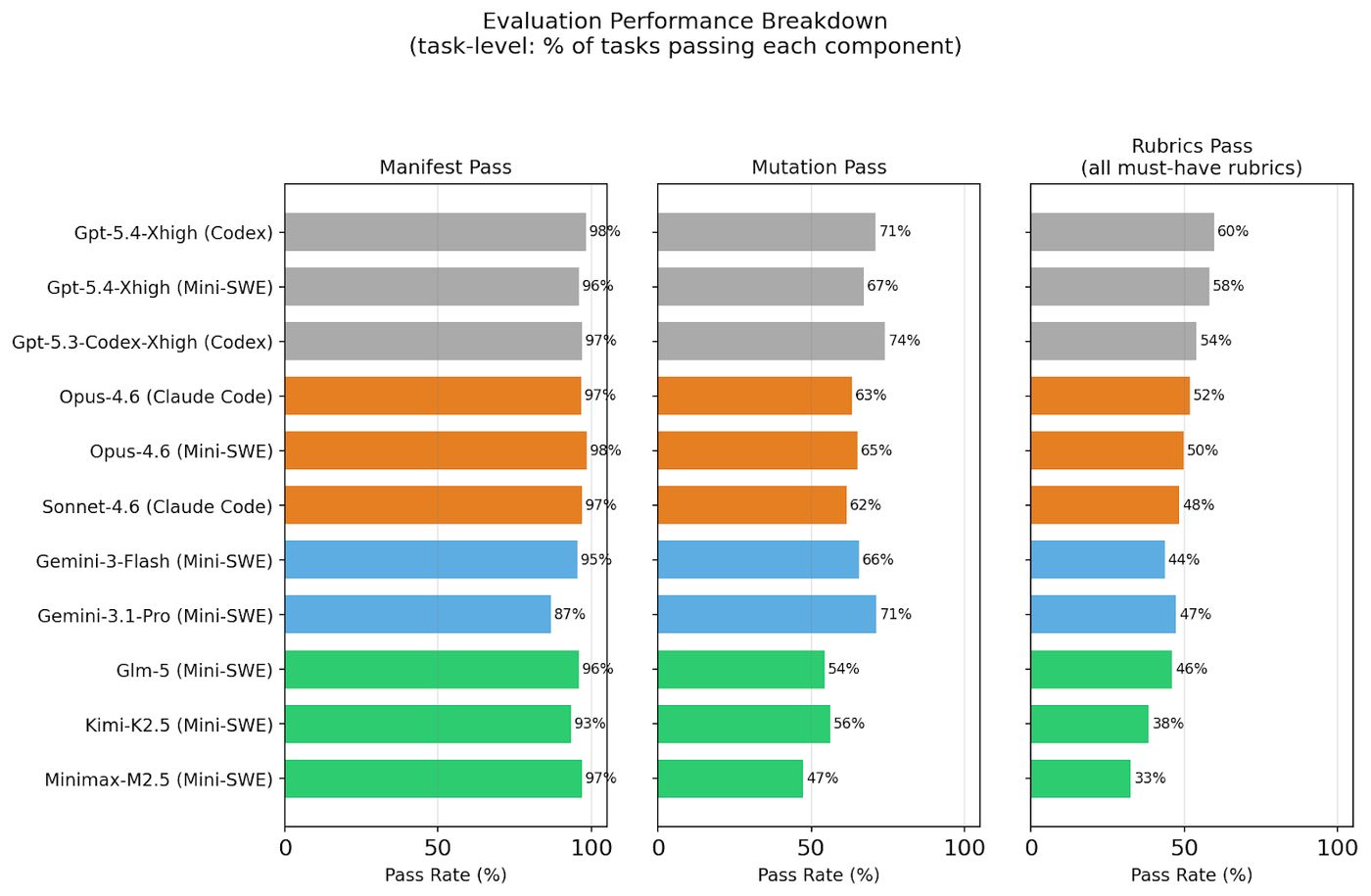

Failure mode breakdown

Since evaluation has 3 components, we break down a model’s success rate across them - manifest check, mutation check and rubric check.

1. Most models write very clear manifests that accurately describe the tests they wrote. Very few tasks fail manifest checks.

2. Models have a higher mutation fail rate – they sometimes write tests that are extraneous and do not test the behavior described in the prompt.

3. The biggest failure mode is rubrics – models often miss writing tests that are expected for the behavior described, thus failing one or more mandatory rubrics. This category truly differentiates frontier coding models from the rest of the pack.

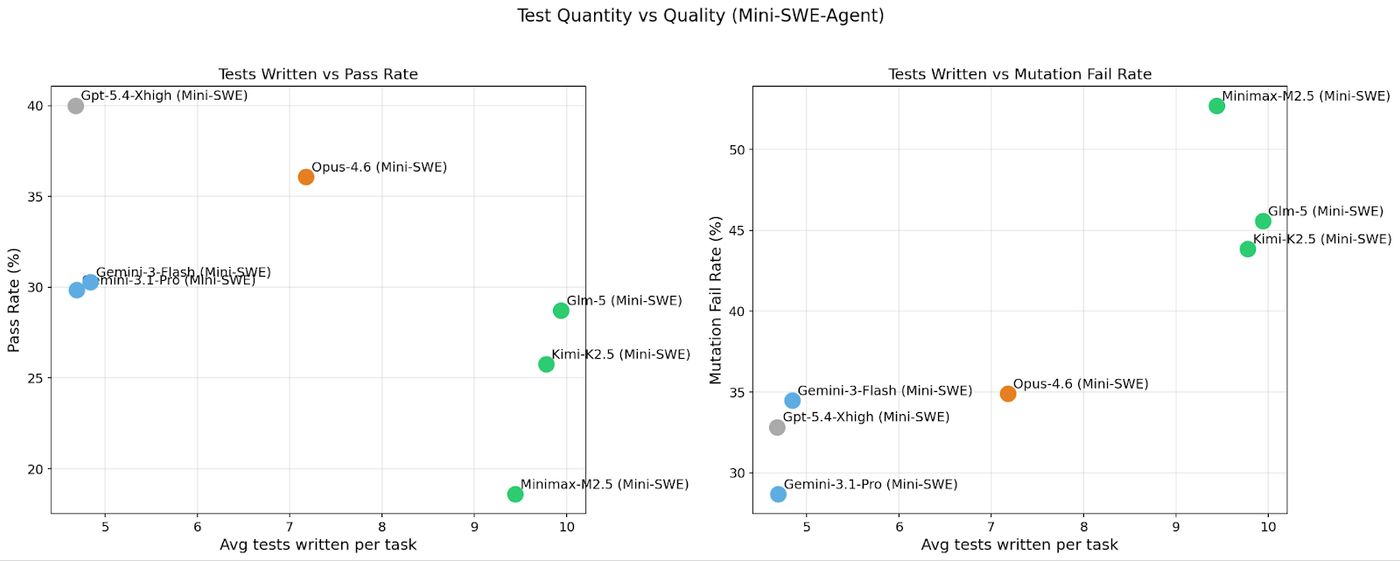

When we plot the average number of tests that the models write against the resolve rate and mutation failure rates, we see that writing a lot of tests doesn’t lead to a higher score on the benchmark. In fact, the leading model under a common scaffold, GPT 5.4, writes the fewest tests. The benchmark design demands targeted tests that are relevant to the task in the prompt and that pass the mutation test against the relevant code.

There is also a strong positive correlation between the number of tests a model writes and its mutation failure rate. The benchmark design therefore encourages surgical test additions instead of spamming the codebase with unnecessary tests.

Rubric failure breakdown

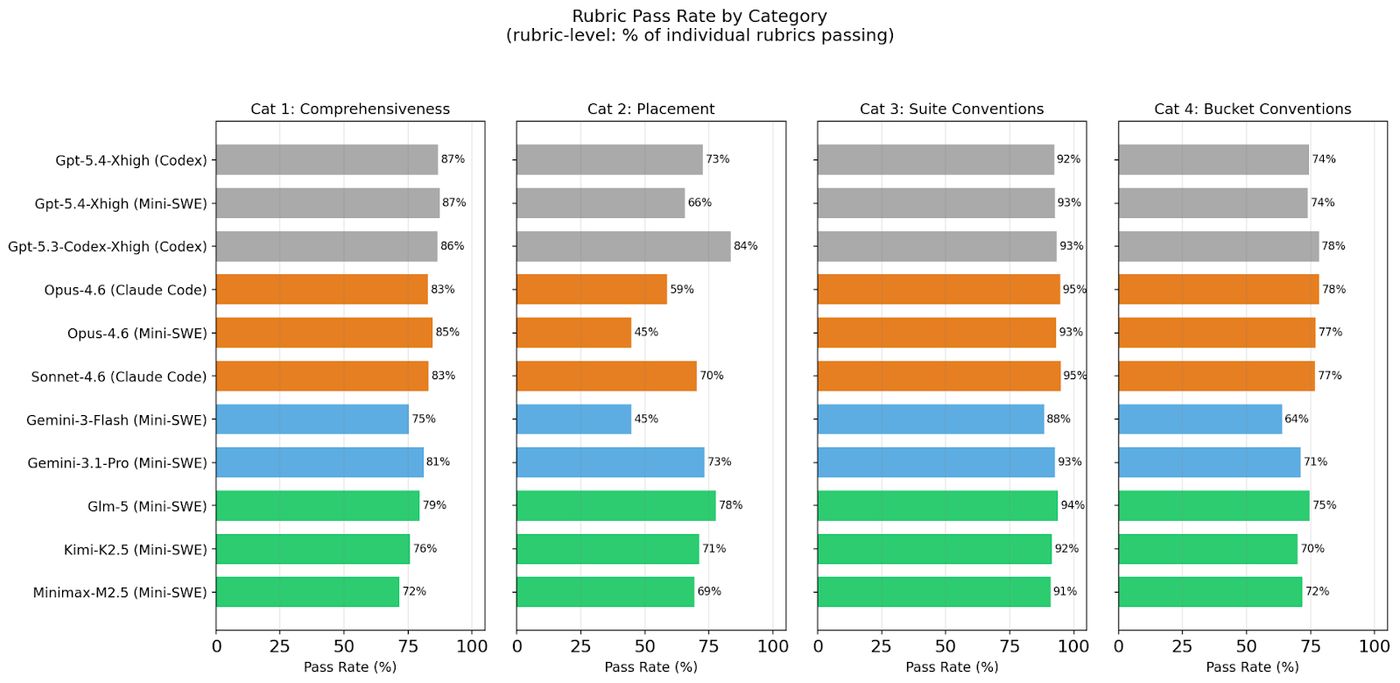

We break down several of these insights here to help developers better understand model behaviors. The figure above breaks down model success at the individual rubric level across tasks, for each category.

Test Placement: This checks if the tests created by the agent are placed in a logical position in the codebase, alongside related tests, which keeps the codebase maintainable and consistent. The GPT-5.4 and GPT-5.3 Codex models are noticeably better at this than Claude Opus and Sonnet, with a large score gap.

Notably, Claude Opus 4.6 consistently creates new test files instead of adding to existing ones. Two main patterns:

1. Function-specific new files: For example, it creates a new test_add_header.py instead of adding to the existing test_email_utils.py. Opus names these files after the specific function being tested rather than the module grouping where the test logically belongs.

2. Wrong directory: Places tests in tests/dashboard/ when they belong in tests/handler/, or creates files in a completely different module.

Interestingly, the scaffold makes a large difference here: Anthropic’s and OpenAI’s models score significantly better (+10% absolute) on test placement and logical consistency when run on their native scaffolds instead of mini-swe-agent.

Test Suite Conventions: This checks if the agent uses the right conventions and best practices for the task’s language and testing framework, at the global repository level. Most models get this right, using the correct framework, test naming scheme, etc.

Test Bucket Conventions: This checks if the agent writes neat, maintainable code at the local file/test bucket level, by re-using the available helper functions, setup and teardown methods, etc. The leading models also perform slightly better than the rest, but still have plenty of room for improvement in high-quality test code.

Trajectory and answer analysis

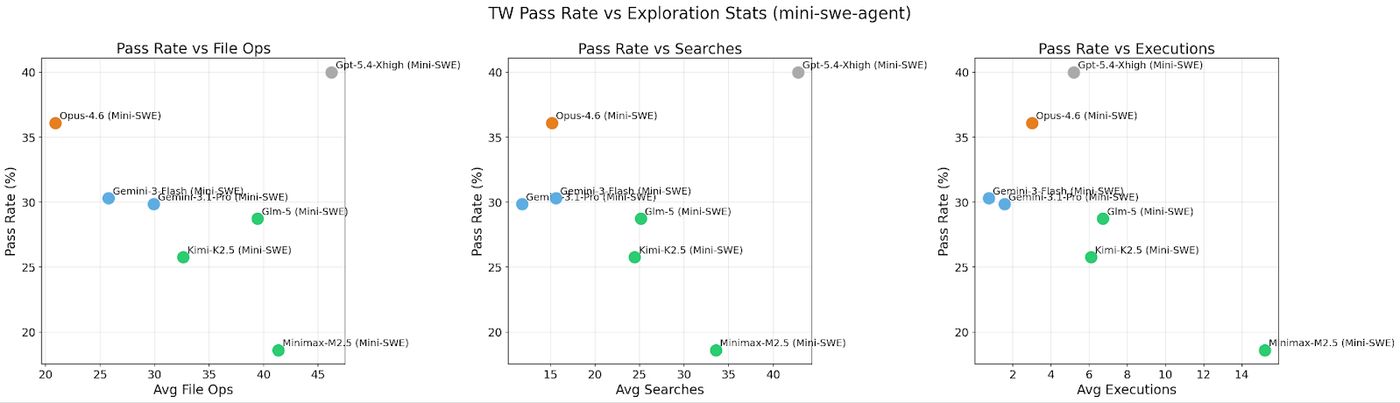

Here we break down the models’ trajectories to better understand how different models approach the task under the Mini-SWE-Agent scaffold, whose trajectories consist of one bash command per step. Since the bash commands issued by models often chain multiple commands together (using &&, ;, etc.), we split them into atomic commands and aggregate them into the following 3 categories:

File Ops - Read (cat, head, tail, etc), Write (mkdir, touch, rm, cp, mv, etc)

Searches - Search (grep, rg, ag, find, xargs), Navigation (ls, cd, pwd, tree, etc)

Execution - Test runners (pytest, jest, yarn test), Build/run (python, node, make, cargo, bash), Package (pip install, npm install, yarn)
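The splitting and bucketing described above could look roughly like the sketch below. It is a simplification under stated assumptions: the split is a naive regex on `&&`, `||`, `;`, and `|` that ignores quoting and subshells, the category sets contain only the programs named above, and `categorize` is a hypothetical helper rather than the analysis code actually used.

```python
import re
from collections import Counter

# Program names taken from the three categories listed above.
CATEGORIES = {
    "File Ops": {"cat", "head", "tail", "mkdir", "touch", "rm", "cp", "mv"},
    "Searches": {"grep", "rg", "ag", "find", "xargs", "ls", "cd", "pwd", "tree"},
    "Execution": {"pytest", "jest", "yarn", "python", "node", "make",
                  "cargo", "bash", "pip", "npm"},
}

# Assumption: pipes are treated as separators too, so `grep ... | head` counts twice.
SPLIT_RE = re.compile(r"&&|\|\||;|\|")


def categorize(command: str) -> Counter:
    """Count atomic commands per category for one trajectory step."""
    counts: Counter = Counter()
    for part in SPLIT_RE.split(command):
        tokens = part.split()
        if not tokens:
            continue
        program = tokens[0]  # the first token decides the category
        for category, programs in CATEGORIES.items():
            if program in programs:
                counts[category] += 1
                break
        else:
            counts["Other"] += 1
    return counts


step = "cd src && grep -rn export . | head -20; pytest -q"
print(categorize(step))
```

For the sample step, this counts two search commands (`cd`, `grep`), one file operation (`head`), and one execution (`pytest`). Commands prefixed with environment variables or wrapped in quotes would need a proper shell parser.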

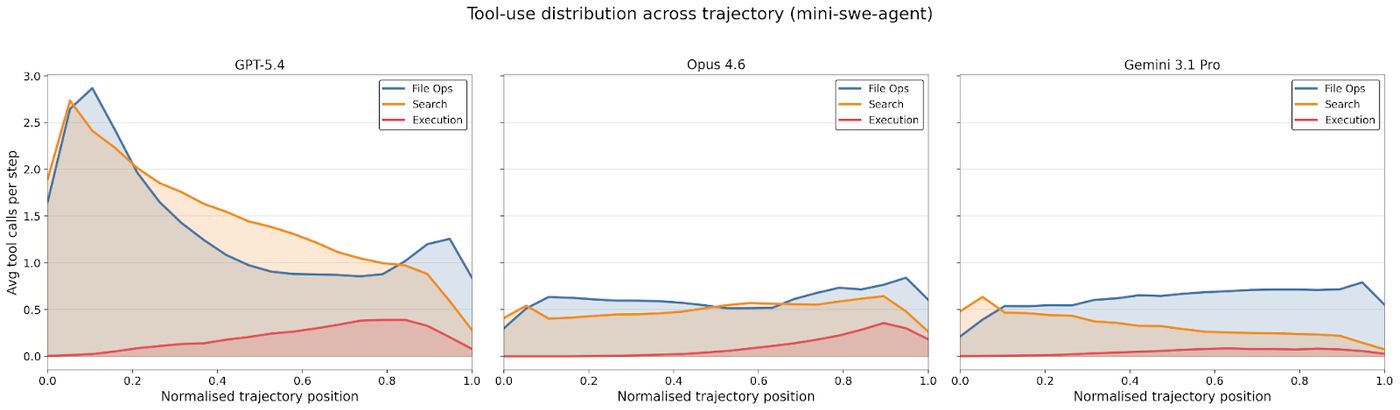

We see that codebase search and exploration are weakly correlated with the models’ pass rates, while no clear pattern exists for file operations and executions. We further analyzed how these three types of tool calls are distributed over the course of the trajectory for three models: GPT 5.4, Claude Opus 4.6 and Gemini-3.1 Pro.

We observe that GPT 5.4 xhigh frontloads a lot of codebase exploration, aggressively searching and viewing the repository at the beginning of the trajectory, while the other models do it later. GPT-5.4 and Opus 4.6 also issue many more code execution commands at the end of the trajectory to run and test the code and its tests, while Gemini-3.1 Pro doesn’t display the same pattern.

Put together, GPT 5.4’s ability to perform deep exploration of the codebase, active runtime analysis and tendency to write precise tests that target the given behavior in the prompt contribute to its high performance.

Performance Comparison

| Model (scaffold) | Task Resolve Rate (%) |
| --- | --- |
| Gpt-5.4-xHigh (Codex CLI) | 44.36±6.04 |
| Gpt-5.5 (Codex) | 42.59±5.96 |
| Gpt-5.4-xhigh (Mini-SWE) | 40.00±6.00 |
| Gpt-5.3-Codex-Xhigh (Codex) | 38.98±6.12 |
| Opus-4.6 (Claude Code) | 36.67±6.63 |
| Opus-4.6 (Mini-SWE) | 36.08±6.02 |
| Sonnet-4.6 (Claude Code) | 31.76±6.24 |
| Muse Spark | 31.11±5.76 |
| Gemini-3-Flash (Mini-SWE) | 30.30±5.80 |
| Gemini-3.1-Pro (Mini-SWE) | 29.84±5.86 |
| Glm-5 (Mini-SWE) | 28.74±5.76 |
| Kimi-K2.5 (Mini-SWE) | 25.77±5.63 |
| Minimax-M2.5 (Mini-SWE) | 18.60±5.20 |